Introduction

The world of AI is rapidly evolving, and with the advent of powerful models like Codestral 22B, the possibilities for code generation and natural language processing are expanding. However, running these models efficiently requires careful consideration of hardware resources, particularly when it comes to GPU memory (VRAM).

You may also want to take a look at Anakin AI.

Anakin AI is an all-in-one platform for workflow automation. Its easy-to-use no-code app builder lets you create AI apps with Llama 3, Claude, GPT-4, uncensored LLMs, Stable Diffusion, and more.

Build your AI app within minutes, not weeks, with Anakin AI.

Can I Run Codestral 22B Locally?

A recent lab test measured the performance of Codestral 22B on a budget-friendly setup: two Nvidia RTX 4060 Ti GPUs, each with 16GB of GDDR6 memory. This configuration provides 32GB of VRAM in total, an amount that until recently was unusual for consumer-grade systems. The test set out to measure how context size affects VRAM usage and how different quantization levels (Q4, Q6, and Q8) affect throughput (tokens per second, t/s), power consumption, and more.
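To make the relationship between quantization, context size, and VRAM more concrete, here is a rough back-of-the-envelope sketch. It is not the method used in the lab test. It assumes nominal bit widths (4, 6, and 8 bits per weight for Q4, Q6, and Q8; real quantization formats carry some extra overhead), and the layer count, KV-head count, and head dimension passed to `kv_cache_bytes` are illustrative values rather than figures from the test. Check the model's configuration for the real ones.

```python
# Rough VRAM estimate: quantized weights plus the key/value (KV) cache.
# All architecture numbers used in the demo below are illustrative assumptions.

PARAMS = 22_000_000_000  # 22B parameters, taken from the model name

# Nominal bits per weight (assumption: real formats add scale/offset overhead).
NOMINAL_BITS = {"Q4": 4, "Q6": 6, "Q8": 8}


def weight_bytes(params: int, bits_per_weight: int) -> int:
    """Bytes needed to store the weights at a given bit width."""
    return params * bits_per_weight // 8


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_len: int, bytes_per_value: int = 2) -> int:
    """Bytes for the keys and values of every layer at full context.

    The factor 2 covers keys plus values; bytes_per_value=2 assumes fp16.
    """
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value


def to_gib(n_bytes: int) -> float:
    """Convert bytes to GiB."""
    return n_bytes / 2**30


if __name__ == "__main__":
    for name, bits in NOMINAL_BITS.items():
        print(f"{name}: ~{to_gib(weight_bytes(PARAMS, bits)):.1f} GiB of weights")
    # Hypothetical architecture values, for illustration only.
    for ctx in (4096, 16384, 32768):
        kv = kv_cache_bytes(n_layers=56, n_kv_heads=8, head_dim=128, context_len=ctx)
        print(f"context {ctx}: ~{to_gib(kv):.2f} GiB of KV cache")
```

Under these assumptions the weights grow linearly with bit width and the cache grows linearly with context length, which is why both settings matter when fitting a model into 32GB.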

To further explore the viability of affordable high-VRAM builds, it's worth examining the memory bandwidth of various budget GPUs. The table below presents a comparison of several models:

| GPU Model | Architecture | Memory Size | Memory Type | Memory Bandwidth | Power Consumption (TDP) |
|---|---|---|---|---|---|
| Nvidia A4000 | Ampere | 16 GB | GDDR6 | 448 GB/s | 140 W |
| Nvidia RTX 4060 Ti 16GB | Ada Lovelace | 16 GB | GDDR6 | 288 GB/s | 165 W |
| Nvidia RTX 3060 | Ampere | 12 GB | GDDR6 | 360 GB/s | 170 W |
| Nvidia Quadro P5000 | Pascal | 16 GB | GDDR5X | 288 GB/s | 180 W |
| Nvidia Quadro RTX 5000 | Turing | 16 GB | GDDR6 | 448 GB/s | 230 W |
| Nvidia Quadro P6000 | Pascal | 24 GB | GDDR5X | 432 GB/s | 250 W |
| Nvidia Titan X | Pascal | 12 GB | GDDR5X | 480 GB/s | 250 W |
| Nvidia Tesla P40 | Pascal | 24 GB | GDDR5 | 346 GB/s | 250 W |

The RTX 3090 is a top performer, with 24GB of VRAM and roughly double the memory bandwidth of the fastest card listed, but its high cost may be prohibitive for many users. More affordable options, such as the RTX 4060 Ti with 16GB of VRAM, trade bandwidth for price and may still offer a compelling balance of performance and cost.
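Memory bandwidth matters because token generation is often limited by how fast the weights can be read from VRAM. A common rule of thumb, used here as an assumption rather than a result from the lab test, is that each generated token requires reading all model weights once, so bandwidth divided by model size gives an optimistic upper bound on tokens per second. The sketch below applies that heuristic to a few bandwidth figures from the table; the 12 GB model size is a hypothetical example value.

```python
# Optimistic upper bound on decode speed for a memory-bandwidth-bound model.
# Heuristic assumption: every generated token reads all weights once.

BANDWIDTH_GB_S = {
    "Nvidia RTX 4060 Ti 16GB": 288,
    "Nvidia RTX 3060": 360,
    "Nvidia A4000": 448,
}


def max_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on tokens/s; real throughput will be lower."""
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


if __name__ == "__main__":
    model_size_gb = 12.0  # hypothetical size of a quantized model
    for gpu, bandwidth in BANDWIDTH_GB_S.items():
        bound = max_tokens_per_second(bandwidth, model_size_gb)
        print(f"{gpu}: at most ~{bound:.0f} t/s")
```

The bound ignores compute time, the KV cache, and multi-GPU overhead, so it is best read as a way to compare cards rather than to predict measured throughput.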

In the following sections, we will examine the key findings of the lab test, discuss the implications for real-world applications, and explore the potential of affordable, high-VRAM GPU builds.

Running Codestral 22B Locally with Ollama

Ollama is an open-source tool for running and interacting with AI models on your own machine, including Codestral 22B, a 22-billion-parameter model for coding tasks developed by Mistral AI. According to Mistral AI, the model was trained on a dataset covering more than 80 programming languages.

Step 1: Install Ollama

Before you can run Codestral 22B, you need to install Ollama on your system. Ollama is available for Windows, macOS, and Linux. You can find the installation instructions and download links on the official Ollama website (https://ollama.com).

For example, on Linux, you can install Ollama with a single command:

    curl -fsSL https://ollama.com/install.sh | sh

On other systems, download the appropriate package from the website and follow the installation instructions provided.

Step 2: Download the Codestral 22B Model

After installing Ollama, you need to download the Codestral 22B model. The original weights are published on Hugging Face at https://huggingface.co/mistralai/Codestral-22B-v0.1, but Ollama pulls its own packaged build from the Ollama model library, so you do not need to download anything from Hugging Face manually.

To download the model, use the Ollama command-line interface (CLI). Open your terminal or command prompt and run the following command:

    ollama pull codestral

This command downloads the model files from the Ollama model library and stores them in Ollama's own local model directory, so it does not matter which directory you run it from.
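If you want to confirm from a script that the pull succeeded, Ollama's local HTTP API can list the installed models. The sketch below assumes the Ollama server is running on its default address, `http://localhost:11434`; the helper names are illustrative.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: Ollama's default local address


def has_model(tags: dict, name: str) -> bool:
    """Return True if a model whose base name (before the ':tag') equals
    `name` appears in a parsed /api/tags reply."""
    return any(m.get("name", "").split(":")[0] == name
               for m in tags.get("models", []))


def list_local_models(base_url: str = OLLAMA_URL) -> dict:
    """Fetch the list of locally installed models from a running Ollama server."""
    with urllib.request.urlopen(f"{base_url}/api/tags") as resp:
        return json.load(resp)
```

With the server running, `has_model(list_local_models(), "codestral")` should return `True` after a successful pull.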

Step 3: Run the Codestral 22B Model

Once the model has been downloaded, you can run it using the Ollama CLI. In your terminal or command prompt, run the following command:

    ollama run codestral

This command will start the Codestral 22B model and open an interactive session where you can provide prompts and receive responses.

Example usage:

Human: Write a Python function to calculate the factorial of a given number.

A: Here's a Python function to calculate the factorial of a given number:

    def factorial(n):
        """
        Calculates the factorial of a given number.

        Args:
            n (int): The number for which to calculate the factorial.

        Returns:
            int: The factorial of the given number.
        """
        if n == 0:
            return 1
        else:
            return n * factorial(n-1)

    # Example usage
    print(factorial(5))  # Output: 120

In this example, the Codestral 22B model generates a Python function that calculates the factorial of a given number using recursion. The function includes a docstring that explains its purpose, arguments, and return value.
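Besides the interactive session, a running Ollama server also exposes a local HTTP API, which is convenient for scripting. The following is a minimal sketch that sends one prompt to the `/api/generate` endpoint using only the Python standard library. It assumes the server is listening on the default `http://localhost:11434` and that the model was pulled under the name `codestral`; the function names are illustrative.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: Ollama's default local address


def build_generate_request(prompt: str, model: str = "codestral",
                           base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str) -> str:
    """Send the prompt to a running Ollama server and return the generated text."""
    with urllib.request.urlopen(build_generate_request(prompt)) as resp:
        return json.load(resp)["response"]
```

With the server running, `generate("Write a Python function to calculate the factorial of a given number.")` should return text similar to the interactive answer above, although the exact output varies from run to run.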

Building an AI App with Anakin AI

Anakin AI is a no-code platform that allows you to create AI applications without writing a single line of code. It provides a user-friendly interface and a wide range of pre-built AI models, making it accessible to users with varying levels of technical expertise.

Step 1: Sign Up for Anakin AI

To get started with Anakin AI, you need to create an account on their website (https://www.anakin.ai). The sign-up process is straightforward and requires you to provide basic information such as your name, email address, and a password.

Step 2: Explore Pre-built AI Apps

Once you've signed up and logged in, you'll be taken to your personal workspace. Anakin AI offers a wide range of pre-built AI apps that you can explore and use immediately. These apps cover various domains, including content generation, image processing, data analysis, and more.

To find pre-built apps, click on the "Discover" button in the top navigation bar. This will take you to a library of available apps, which you can filter based on categories or search for specific functionalities.

Step 3: Create a New AI App

If you can't find a pre-built app that meets your needs, you can create a new AI app from scratch. In your personal workspace, click on the "Create App" button and select the type of app you want to build.

Anakin AI offers three main types of apps:

- Quick App: This type of app is designed for generating text content, such as blog posts, translations, or creative writing.

- Chatbot App: As the name suggests, this app allows you to create chatbots that can engage in natural conversations with users.

- Workflow App: Workflow apps are more complex and allow you to combine multiple AI models and functionalities into a single application.

For this example, let's create a Quick App.

Step 4: Customize Your AI App

After selecting the app type, you'll be taken to the app builder interface. Here, you can customize various aspects of your app, such as the input fields, the AI model to be used, and the output format.

For a Quick App, you'll typically define the following:

- Input Fields: These are the fields where users will provide the necessary information for the app to generate content. For example, if you're building an app to generate blog post ideas, you might have input fields for the topic, target audience, and desired tone.

- AI Model: Anakin AI offers a range of AI models from different providers, such as OpenAI, Anthropic, and Google. You can select the model that best suits your app's requirements.

- Prompt Template: This is the template that the AI model will use to generate content based on the user's input. You can customize the prompt template to ensure that the generated content meets your specific needs.

- Output Format: Finally, you can specify the format in which the generated content should be presented, such as plain text, Markdown, or HTML.

Once you've customized your app, you can test it by providing sample input and generating content. If you're satisfied with the results, you can save your app and make it available for others to use.
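Conceptually, a prompt template is a piece of text with placeholders that are filled in from the input fields. The sketch below illustrates the idea with Python's `string.Template`. It is not Anakin AI's implementation, and the template text and field names (`topic`, `audience`, `tone`, borrowed from the blog-post example above) are hypothetical.

```python
from string import Template

# Hypothetical template; each $name placeholder corresponds to one input field.
PROMPT_TEMPLATE = Template(
    "Suggest five blog post ideas about $topic "
    "for $audience, written in a $tone tone."
)


def render_prompt(inputs: dict) -> str:
    """Fill the template from the input fields.

    substitute() raises KeyError if a required field is missing.
    """
    return PROMPT_TEMPLATE.substitute(inputs)


print(render_prompt({
    "topic": "local LLMs",
    "audience": "developers",
    "tone": "practical",
}))
```

Failing loudly on a missing field is a deliberate choice in this sketch: a prompt with an unfilled placeholder usually produces poor output without any visible error.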

Step 5: Integrate Anakin AI's API (Optional)

Anakin AI offers comprehensive API services for all applications, empowering developers and organizations to seamlessly integrate and enhance their projects using Anakin AI's APIs.

By leveraging these APIs, users gain the flexibility to easily access Anakin AI's robust product features within their own applications. This capability allows developers and organizations to meet their specific customization requirements without the hassle of managing complex backend architecture and deployment processes. As a result, development costs and workload are significantly reduced, providing unparalleled convenience for developers.

Advantages of API Integration

- Rapidly develop AI applications tailored to your business needs using Anakin AI's intuitive visual interface, with real-time implementation across all clients.

- Support for multiple AI model providers, allowing you the flexibility to switch providers as needed.

- Pre-packaged access to the essential functionalities of the AI model.

- Stay ahead of the curve with upcoming advanced features available through the API.

How to Use Anakin AI with Codestral 22B

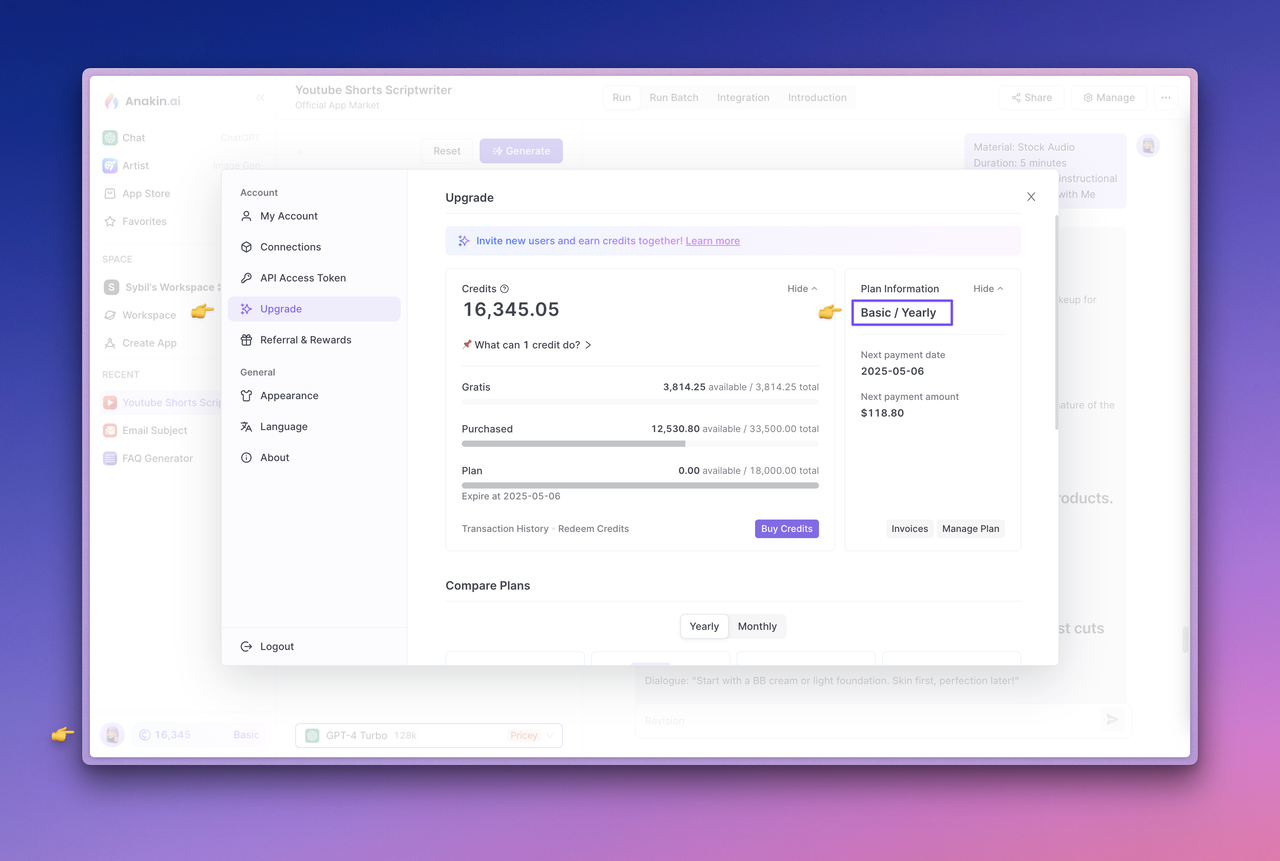

Upgrade Your Plan and Check Your Account Credits: Anakin AI's API service is currently exclusively available to subscribers. While using the AI model through API calls, credits from your account balance will be consumed. To check your subscription status or upgrade your plan, navigate to the Anakin AI Web App, click on the avatar located in the lower-left corner, and access the Upgrade page. Please ensure that your current account has sufficient credits.

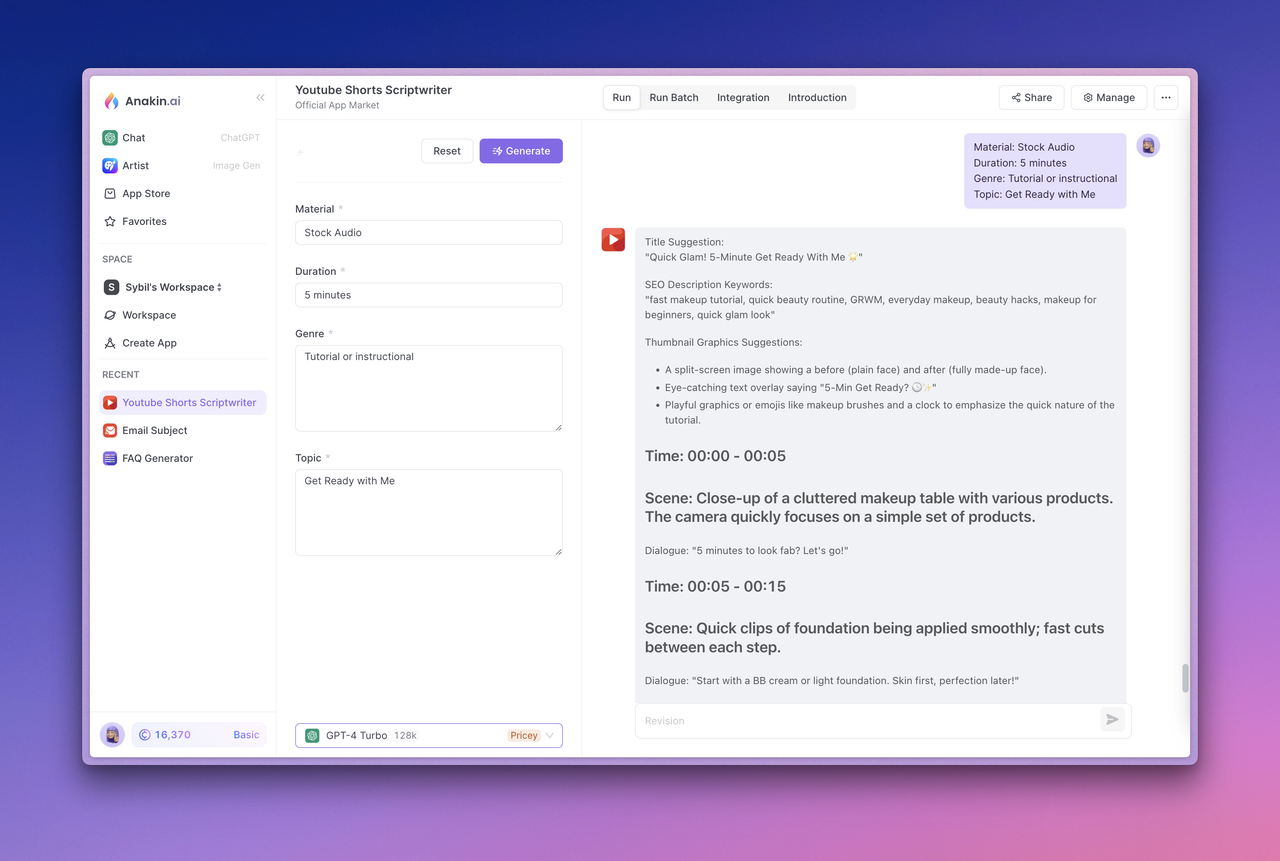

Test Your App: Now, to test the app, select the app and click the "Generate" button. Confirm it runs properly and produces the expected output before proceeding.

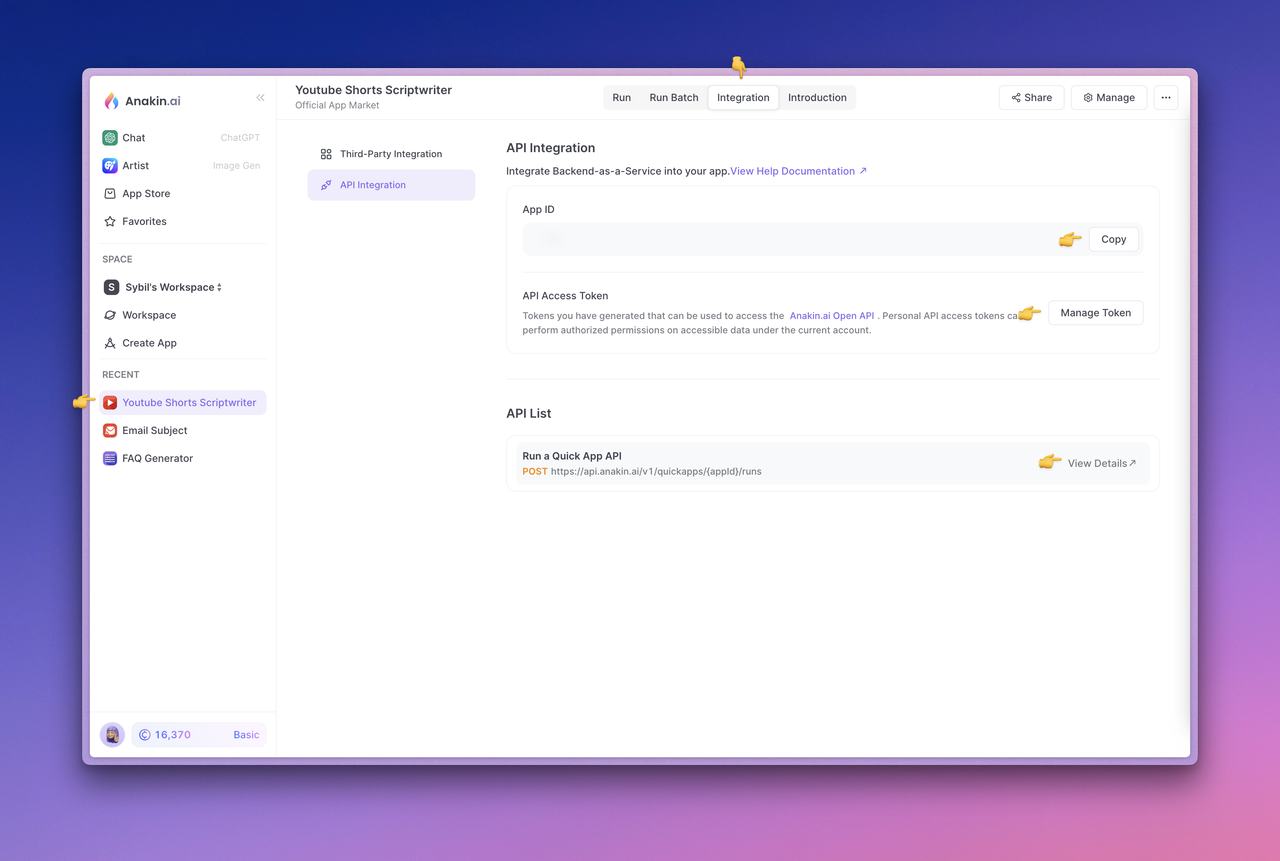

View API Documentation and Manage API Access Tokens: Next, visit the app Integration section at the top. In this section, you can click "View Details" to view the API documentation provided by Anakin AI, manage access tokens, and view the App ID.

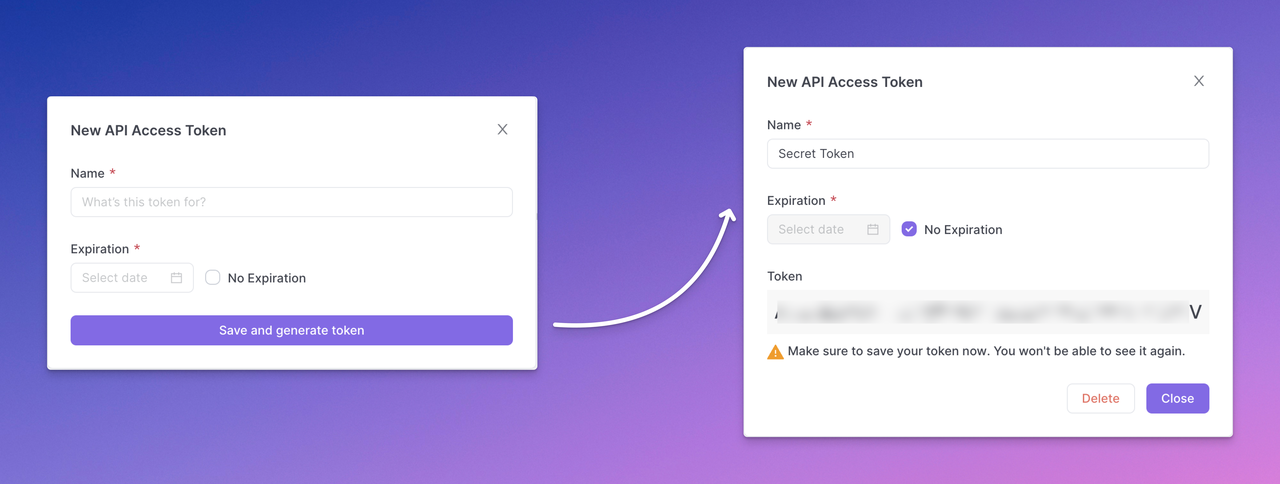

Generate Your API Access Token: Click the "Manage Token" button to manage your API Access token and select "New Token" to generate your API access token. Complete the token configuration, then click "Save and generate token," and finally, copy and save the API Access Token securely.

Note that the generated API access token is displayed only once, so copy it and store it securely right away. As a best practice, keep API tokens on your backend and make API calls from there, rather than exposing them in plaintext in frontend code or requests; this helps prevent abuse of or attacks on your app. You can create multiple API access tokens for an app and distribute them among different users or developers. API users can then access the AI capabilities the app provides, while the underlying prompt engineering and other tool capabilities remain protected.
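One common way to keep a token out of source code is to read it from an environment variable on the backend. The sketch below shows that pattern; the variable name `ANAKINAI_API_ACCESS_TOKEN` is an assumption borrowed from the placeholder used in the request examples, and the helper names are illustrative.

```python
import os

# Hypothetical environment variable name; choose whatever fits your deployment.
TOKEN_ENV_VAR = "ANAKINAI_API_ACCESS_TOKEN"


def load_api_token(env=None) -> str:
    """Read the API token from the environment instead of hard-coding it."""
    env = os.environ if env is None else env
    token = env.get(TOKEN_ENV_VAR, "").strip()
    if not token:
        raise RuntimeError(f"{TOKEN_ENV_VAR} is not set")
    return token


def auth_header(token: str) -> dict:
    """Build the Authorization header expected by the API."""
    return {"Authorization": f"Bearer {token}"}
```

Passing `env` explicitly makes the helper easy to test without touching the real environment.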

Quick App API Example

A Quick App allows you to generate text content such as blog posts, translations, and other creative content. Calling the "Run a Quick App" API sends the user's input and returns the generated text.

The model parameters and prompt template used to generate text depend on the input settings in the Anakin AI App -> Manage -> Design Page.

You can find the API documentation and request examples for the app in App -> Integration -> API List -> View Details.

Here's an API call example to create text completion information:

    curl --location --request POST 'https://api.anakin.ai/v1/quickapps/{{appId}}/runs' \
    --header 'Authorization: Bearer ANAKINAI_API_ACCESS_TOKEN' \
    --header 'X-Anakin-Api-Version: 2024-05-06' \
    --header 'Content-Type: application/json' \
    --data-raw '{
        "inputs": {
            "Product/Service": "Cloud Service",
            "Features": "Reliability and performance.",
            "Advantages": "Efficiency",
            "Framework": "Attention-Interest-Desire-Action"
        },
        "stream": true
    }'
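The same request can be made from backend code. The following is a minimal Python sketch that mirrors the curl call above using only the standard library. The URL, headers, and body fields are copied from that example; the function names are illustrative, and because the response format is not described here, the sketch simply yields the raw response lines without parsing them.

```python
import json
import urllib.request

API_BASE = "https://api.anakin.ai/v1"
API_VERSION = "2024-05-06"  # value of X-Anakin-Api-Version in the curl example


def build_quick_app_request(app_id: str, token: str, inputs: dict,
                            stream: bool = True) -> urllib.request.Request:
    """Build the POST request for the "Run a Quick App" endpoint."""
    body = json.dumps({"inputs": inputs, "stream": stream}).encode("utf-8")
    return urllib.request.Request(
        f"{API_BASE}/quickapps/{app_id}/runs",
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "X-Anakin-Api-Version": API_VERSION,
            "Content-Type": "application/json",
        },
        method="POST",
    )


def run_quick_app(app_id: str, token: str, inputs: dict):
    """Send the request and yield the raw response lines as text."""
    req = build_quick_app_request(app_id, token, inputs)
    with urllib.request.urlopen(req) as resp:
        for raw_line in resp:
            yield raw_line.decode("utf-8").rstrip("\n")
```

The keys of `inputs` must match the input fields defined for your app, as in the `"Product/Service"` and `"Features"` fields of the curl example.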

Chatbot App API Example

A Chatbot App lets you create chatbots that interact with users in a natural, question-and-answer format. To start a conversation, call the "Conversation with Chatbot" API; to continue it, pass the parameter returned in the response back with each subsequent request.

You can find the API documentation and sample requests for the app in App -> Integration -> API List -> View Details.

Here's an API call example to send conversation messages:

    curl --location --request POST 'https://api.anakin.ai/v1/chatbots/{{appId}}/messages' \
    --header 'Authorization: Bearer ANAKINAI_API_ACCESS_TOKEN' \
    --header 'X-Anakin-Api-Version: 2024-05-06' \
    --header 'Content-Type: application/json' \
    --data-raw '{
        "content": "What is your name? Are you the clever one?",
        "stream": true
    }'
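When a message contains quotes or apostrophes, quoting it by hand inside a shell command is error-prone, because an apostrophe ends a single-quoted shell string. Building the JSON body in code sidesteps the problem. The sketch below does this for the chatbot endpoint shown above; the function name is illustrative, and sending the request and reading the reply are left out.

```python
import json
import urllib.request


def build_chat_request(app_id: str, token: str, content: str,
                       stream: bool = True) -> urllib.request.Request:
    """Build the POST request that sends one message to a Chatbot App."""
    # json.dumps escapes quotes and other special characters in the message.
    body = json.dumps({"content": content, "stream": stream}).encode("utf-8")
    return urllib.request.Request(
        f"https://api.anakin.ai/v1/chatbots/{app_id}/messages",
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "X-Anakin-Api-Version": "2024-05-06",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("my-app", "tok", "What's your name? Are you the clever one?")
print(req.data.decode("utf-8"))
```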

By integrating Anakin AI's APIs into your applications, you can leverage the power of AI models and functionalities while maintaining control over the user experience and customization options.

Conclusion

In this comprehensive guide, you've learned how to run the Codestral 22B model using Ollama, a powerful tool for interacting with AI models, and how to build AI applications using Anakin AI, a no-code platform that simplifies the process of creating and deploying AI solutions.

By following the steps outlined in this article, you can harness the capabilities of the Codestral 22B model for coding tasks and leverage Anakin AI to create customized AI apps tailored to your specific needs. Whether you're a developer, entrepreneur, or simply an AI enthusiast, these tools provide a seamless and accessible way to explore the vast potential of artificial intelligence.
