The landscape of conversational AI has been profoundly transformed with the advent of OpenChat-3.5-1210, a model that promises to be more than just an incremental update.

This latest iteration stands as a testament to the relentless pursuit of excellence in the realm of Large Language Models (LLMs). Where once ChatGPT and Grok models were the benchmarks, there now looms a formidable new contender. OpenChat-3.5-1210 doesn't merely edge past its predecessors; it redefines the benchmarks by which LLMs are judged.

Article Keypoints:

You can test out the OpenChat-3.5-1210 Model with Anakin AI online.

What is OpenChat?

OpenChat is an open-source Large Language Model (LLM) known for its remarkable coding capabilities and generalist approach.

Even at a size of 7B parameters, this model delivers high-quality performance on complex language tasks, and on several of the benchmarks reported later in this article it scores higher than ChatGPT and Grok.

- Enhanced Code Generation: The remarkable leap in the HumanEval benchmark underscores the model’s improved proficiency in code understanding and generation—akin to a craftsman achieving a new level of mastery in their art.

- Strategic Fine-Tuning: Employing C-RLFT, a technique drawn from offline reinforcement learning, allows OpenChat to learn effectively from mixed-quality datasets without the need for explicit preference labels.

- Generalist Knowledge: OpenChat-3.5-1210's excellence is not confined to coding. It shines across a spectrum of benchmarks like MMLU, TruthfulQA, and AGIEval, showcasing a versatility that characterizes a truly generalist AI model.
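
To make the fine-tuning idea in the list above more concrete, here is a minimal sketch of what a class-conditioned, reward-weighted objective could look like. It is not OpenChat's training code: the source names, the weights (1.0 and 0.1), the prefix strings, and the per-example loss values are all illustrative assumptions. The point is only that each example is tagged with its data source and its loss is scaled by a coarse, source-level weight instead of a per-example preference label.

```python
# Illustrative sketch of a class-conditioned, reward-weighted objective.
# All names and numbers below are assumptions, not OpenChat's actual values.

# Coarse reward per data source: no per-example preference labels needed.
SOURCE_WEIGHT = {"expert": 1.0, "suboptimal": 0.1}

# Hypothetical prefixes that tell the model which source an example came from.
SOURCE_PREFIX = {"expert": "[expert] ", "suboptimal": "[suboptimal] "}


def condition_prompt(source: str, prompt: str) -> str:
    """Prepend the source tag so the model learns a source-conditioned policy."""
    return SOURCE_PREFIX[source] + prompt


def weighted_loss(batch: list[tuple[str, float]]) -> float:
    """Average per-example loss, scaled by the weight of each example's source.

    `batch` holds (source, negative_log_likelihood) pairs; in real training the
    second value would come from the model's forward pass.
    """
    total = sum(SOURCE_WEIGHT[src] * nll for src, nll in batch)
    return total / len(batch)


batch = [("expert", 2.0), ("suboptimal", 2.0)]
print(condition_prompt("expert", "Write a sort function."))
print(weighted_loss(batch))
```

With equal raw losses, the lower-weighted source contributes far less to the total, so the model is pulled mainly toward the higher-quality data while still seeing the rest.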

OpenChat 3.5-1210 is a testament to the advancements in AI, delivering a level of accessibility and performance that propels the capabilities of open-source language models forward.

How to Try OpenChat-3.5-1210 Online

One of the easiest ways to run OpenChat is using the API provided by Anakin AI:

Anakin AI is not just an alternative; it's a gateway to a diverse array of AI models, each with unique capabilities and advantages. Imagine having the power to tailor your AI experience, selecting from a suite of models to perfectly align with your project's needs.

Here are some of the other models that Anakin AI supports, both proprietary and open-source:

- GPT-4: Boasting an impressive context window of up to 128k tokens, this model takes deep learning to new heights.

- Claude-2.1 and Claude Instant: These variants provide nuanced understanding and responses, tailored to different interaction speeds.

- Google Gemini Pro: A model designed for precision and depth in information retrieval.

- Mistral 7B and Mixtral 8x7B: Specialized models that offer a blend of generative capabilities and scale.

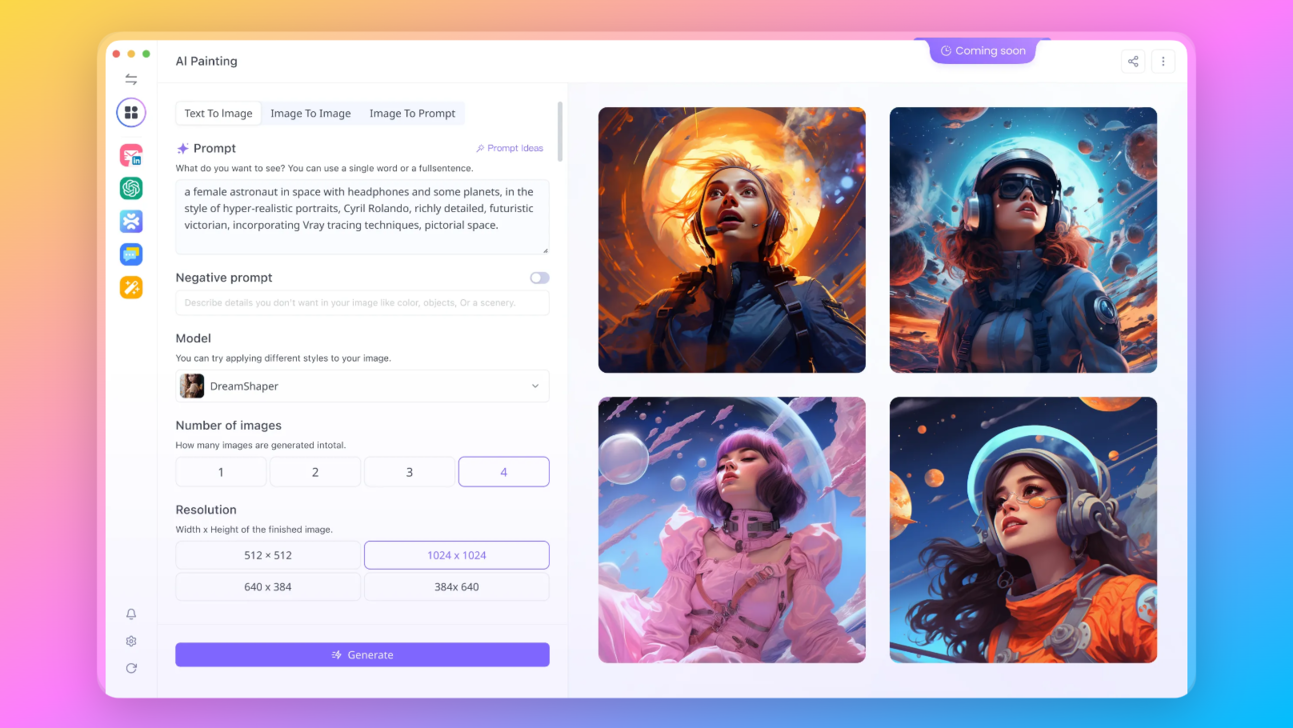

Your vision isn't limited to text, and neither should your AI be. With Anakin AI, you gain access to state-of-the-art image generation models such as:

- DALL-E 3: Create stunning, high-resolution images from textual descriptions.

- Stable Diffusion: Generate images with a unique artistic flair, perfect for creative projects.

Interested in trying OpenChat-3.5-1210? Try it now at Anakin.AI!

How Good is OpenChat 3.5 1210, Compared to ChatGPT and Grok?

OpenChat-3.5-1210's rise in the AI landscape is marked by a gain of more than 13 points on the HumanEval benchmark over the original OpenChat 3.5 (from 55.5 to 68.9 in the table below). This is not just a quantitative leap but a qualitative one, highlighting the model's refined coding capabilities.

Performance Benchmark of OpenChat-3.5-1210

Here is the benchmark graph, compared with ChatGPT and Grok (developed by Elon Musk's xAI):

Additional data for model comparison:

| Model | License | # Params | Average | MMLU | HumanEval | MATH | GSM8K |
|---|---|---|---|---|---|---|---|
| OpenChat 3.5 1210 | Apache-2.0 | 7B | 60.1 | 65.3 | 68.9 | 28.9 | 77.3 |
| OpenChat 3.5 | Apache-2.0 | 7B | 56.4 | 64.3 | 55.5 | 28.6 | 77.3 |
| Grok-0 | Proprietary | 33B | 44.5 | 65.7 | 39.7 | 15.7 | 56.8 |
| Grok-1 | Proprietary | ???B | 55.8 | 73 | 63.2 | 23.9 | 62.9 |
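
The Average column appears to be the unweighted mean of the four benchmark scores in each row. The source does not state this, so treat it as an inference. A short check using only the numbers from the table:

```python
# Scores copied from the table above: (MMLU, HumanEval, MATH, GSM8K).
scores = {
    "OpenChat 3.5 1210": (65.3, 68.9, 28.9, 77.3),
    "OpenChat 3.5": (64.3, 55.5, 28.6, 77.3),
    "Grok-0": (65.7, 39.7, 15.7, 56.8),
    "Grok-1": (73.0, 63.2, 23.9, 62.9),
}

# Average column as reported in the table.
reported = {
    "OpenChat 3.5 1210": 60.1,
    "OpenChat 3.5": 56.4,
    "Grok-0": 44.5,
    "Grok-1": 55.8,
}


def mean(values):
    """Unweighted arithmetic mean."""
    return sum(values) / len(values)


for model, row in scores.items():
    print(f"{model}: computed {mean(row):.2f}, reported {reported[model]}")

# HumanEval gain of the 1210 release over the original OpenChat 3.5.
humaneval_gain = scores["OpenChat 3.5 1210"][1] - scores["OpenChat 3.5"][1]
print(f"HumanEval gain: {humaneval_gain:.1f} points")
```

Each computed mean agrees with the reported Average to within rounding, which supports reading the column as a simple four-benchmark mean.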

Understanding the Benchmarks: A Snapshot

- OpenChat 3.5 1210 outperforms its competition on several benchmarks:

  - Average score: 60.1, the highest in the table.

  - HumanEval: 68.9, the top code generation score among the listed models.

  - GSM8K: 77.3, well ahead of Grok-0 (56.8) and Grok-1 (62.9).

- Grok-0 and Grok-1 fall behind in key areas, even though Grok-0 has 33B parameters (Grok-1's size is not listed):

  - MATH: OpenChat's score of 28.9 is nearly double Grok-0's 15.7.

  - Overall: The Grok models have lower average scores, 44.5 and 55.8 respectively.

  - MMLU is the exception: Grok-1 leads there with 73, against 65.3 for OpenChat 3.5 1210.

- OpenChat is released under the open-source Apache-2.0 license, whereas the Grok models are proprietary.

What Do These Benchmarks Mean?

Here are our assessments of this data:

- OpenChat Dominates HumanEval Benchmark: This is crucial because HumanEval checks whether the code a model generates actually passes unit tests for a set of programming problems. Achieving high scores here means the model can generate code that is not only syntactically correct but also functionally correct.

- Developers can leverage this model for a more nuanced and reliable coding assistant, streamlining workflows and potentially reducing the time spent on debugging.

- Users will experience more coherent and contextually aware interactions, whether they are engaging in casual conversations or seeking detailed explanations on complex topics.

Comparing OpenChat-3.5-1210 with ChatGPT and the Grok series reveals a clear edge for the former. The radar charts translate into a compelling story of OpenChat-3.5-1210’s superior performance.

What is HumanEval, and What is TruthfulQA?

For people who are curious about what these benchmarks mean, here are more detailed explanations of each benchmark:

- GSM8K: A benchmark of grade-school math word problems. A higher score indicates a stronger ability to carry out multi-step arithmetic reasoning.

- MT-Bench: A multi-turn conversation benchmark. It measures how well a model follows instructions and stays coherent across several turns of dialogue, with answers typically graded by a strong LLM acting as judge.

- HumanEval: This benchmark measures a model's programming ability. It consists of 164 hand-written Python problems, and a generated solution counts as correct only if it passes the problem's unit tests.

- BBH MC: BBH stands for BIG-Bench Hard, a collection of particularly challenging tasks drawn from the BIG-Bench suite. BBH MC refers to its multiple-choice tasks, where the model must select the correct option from a set of possible answers.

- AGIEval: This benchmark is built from human-centric standardized exams, such as college entrance exams, law school admission tests, and math competitions, and evaluates how well a model handles the kind of reasoning those exams demand.

- TruthfulQA: This benchmark assesses the model's ability to provide truthful answers. Its questions are written so that common misconceptions lead to false answers, which makes it a measure of how well a model avoids repeating popular falsehoods.

- MMLU: The MMLU (Massive Multitask Language Understanding) benchmark evaluates a model's knowledge with multiple-choice questions across 57 subjects, indicating its general language understanding ability.

- BBH CoT: The same BIG-Bench Hard tasks evaluated with chain-of-thought (CoT) prompting, where the model is asked to write out its reasoning step by step before giving a final answer.
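
The HumanEval-style scoring described in the list above can be illustrated with a toy functional-correctness check: a generated solution counts as correct only if it passes the task's unit tests. The task, the candidate solutions, and the helper names here are invented for illustration and are not taken from the benchmark. A real harness would also sandbox the untrusted code.

```python
# Toy functional-correctness check in the spirit of HumanEval.
# The task, candidates, and tests are invented for illustration.

# Hidden unit tests for a task: "write add(a, b) that returns the sum".
TEST_CODE = """
assert add(1, 2) == 3
assert add(-1, 1) == 0
"""

# Pretend these strings were sampled from a model.
candidates = [
    "def add(a, b):\n    return a + b",   # correct
    "def add(a, b):\n    return a - b",   # wrong logic
    "def add(a, b) return a + b",         # syntax error
]


def passes_tests(candidate: str, test_code: str) -> bool:
    """Run the candidate, then the tests, in a fresh namespace.

    WARNING: calling exec on model output is unsafe outside a sandbox.
    """
    namespace = {}
    try:
        exec(candidate, namespace)
        exec(test_code, namespace)
    except Exception:
        return False
    return True


results = [passes_tests(c, TEST_CODE) for c in candidates]
pass_rate = sum(results) / len(results)
print(results, pass_rate)
```

Only the first candidate passes, so the pass rate here is one in three. The benchmark reports the same kind of fraction aggregated over all of its problems.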

Step-by-Step Guide to Run OpenChat LLM Locally

Requirements for Running OpenChat Locally

To run the OpenChat trainer on your local machine, you'll need to meet the following hardware specifications, which depend on the model size. These figures apply to training (fine-tuning); simply chatting with the 7B model needs considerably less hardware.

For the 13B model:

- Eight A100 or H100 GPUs, each with 80GB of VRAM.

For the 7B model:

- Either four A100/H100 GPUs with 80GB of VRAM each, or eight A100/H100 GPUs with 40GB of VRAM each.
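
A back-of-the-envelope estimate helps explain why training calls for several large GPUs while the 7B weights alone fit in far less memory. The sketch below assumes 2 bytes per parameter for 16-bit weights and a commonly cited rule of thumb of about 16 bytes per parameter for mixed-precision training with Adam (weights, gradients, and optimizer states). It ignores activations and framework overhead. These byte counts are assumptions, not figures from the OpenChat project.

```python
# Rough memory estimate; bytes-per-parameter figures are rule-of-thumb assumptions.
BYTES_PER_PARAM_INFERENCE = 2    # 16-bit weights only
BYTES_PER_PARAM_TRAINING = 16    # weights + gradients + Adam states (mixed precision)
GB = 1e9


def memory_gb(num_params: float, bytes_per_param: int) -> float:
    """Memory needed for the parameters alone, in gigabytes."""
    return num_params * bytes_per_param / GB


for name, params in [("7B", 7e9), ("13B", 13e9)]:
    infer = memory_gb(params, BYTES_PER_PARAM_INFERENCE)
    train = memory_gb(params, BYTES_PER_PARAM_TRAINING)
    print(f"{name}: ~{infer:.0f} GB to hold weights, ~{train:.0f} GB of training state")
```

Under these assumptions the 7B model carries roughly 112 GB of training state, more than a single 80GB card can hold, which is consistent with the multi-GPU setups listed above.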

Also, ensure that you have Python installed on your system. OpenChat requires Python 3.6 or newer. You will also need pip for installing Python packages and git for cloning the repository.

Step 1: Install OpenChat

Open your terminal and install OpenChat using pip. The package is published under the name ochat, and this command installs the latest version along with its dependencies:

pip3 install ochat

Step 2: Verify Installation

To verify that OpenChat has been installed correctly, you can ask pip to show the installed package:

pip show ochat

This should print the version number and other metadata of the package, confirming that the installation was successful.

Step 3: Running the Model

With OpenChat installed, you can now run the model. By default, OpenChat provides a simple CLI to interact with the model directly in your terminal. Use the following command to start a conversation:

python -m openchat

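You will be prompted to choose a model and can then start chatting right away. If your installed version does not provide this entry point, use the API server described in Step 5 instead.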

Step 4: Advanced Options

For more advanced usage, such as specifying a particular model or running a server, you can use additional command-line arguments. Here’s how to run OpenChat with a specific model:

python -m openchat --model "openchat/openchat-3.5-1210"

Step 5: Running as an API Server

If you plan to integrate OpenChat with other applications, you can run it as an API server:

python -m ochat.serving.openai_api_server --model openchat/openchat-3.5-1210

This command starts a local server that listens for requests on the default port (localhost:18888 according to the project's documentation) and follows the OpenAI ChatCompletion API format.
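
A client for the server started above might look like the following sketch. It assumes the server exposes an OpenAI-style `/v1/chat/completions` route at `localhost:18888` and accepts the model name `openchat_3.5`. These values are assumptions based on the project's documentation and should be checked against your installation. Only the Python standard library is used, and the network call is kept inside a function so nothing is sent until you call it.

```python
import json
import urllib.request

# Assumed defaults; adjust to match your running server.
API_URL = "http://localhost:18888/v1/chat/completions"
MODEL_NAME = "openchat_3.5"


def build_request(prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for a single user message."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str) -> str:
    """Send the prompt to the local server and return the assistant's reply."""
    with urllib.request.urlopen(build_request(prompt)) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Example call (requires the API server from this step to be running):
# print(chat("Write a Python function that reverses a string."))
```

Because the request follows the OpenAI chat format, existing OpenAI-compatible client libraries should also work if pointed at the local base URL.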

Additional Platforms to Run OpenChat 3.5 1210

You can also try OpenChat 3.5 1210 on these platforms:

- Try OpenChat-3.5-1210 Free with Anakin AI.

- Try OpenChat-3.5-1210 on HuggingFace

- Try the model with Together AI’s Inference API

Conclusion: Envisioning the Future with OpenChat-3.5-1210

The success of OpenChat-3.5-1210 hints at a future where LLMs could offer even more personalized and context-aware interactions, anticipate needs, and provide solutions with little to no human input. From powering sophisticated virtual assistants to providing on-the-fly programming support, the potential applications are boundless.

This is not just an invitation to witness AI’s evolution—it’s a call to be a part of it. OpenChat-3.5-1210 is more than a tool; it's a harbinger of AI's boundless future, waiting for you to unlock its potential. So, go ahead, test the limits of this model, and be a part of shaping the narrative of tomorrow's AI landscape.